College of Engineering Unit:

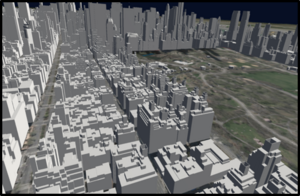

Our team’s project takes real geospatial data on a region and its infrastructure and brings it into a virtual reality environment to support AI-assisted damage assessment. This allows a user to visualize and interact with the environment. We start by processing the geospatial data in ArcGIS Pro, creating a scene layer package to be streamed to the Unity component. Using ArcGIS Developer to store and host the scene layer package reduces the resources needed to host the data on our end. With the layer prepared, we bring it into our rendered environment in Unity using the ArcGIS Maps SDK package. The rendering of structures in the environment depends on the user’s position and view direction in Unity.
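To illustrate what it means for rendering to depend on the user's position and view direction, the following is a minimal sketch of a view-cone test. It is not the ArcGIS Maps SDK's actual streaming or level-of-detail logic, which is internal to the package. The function name `is_in_view` and the `fov_deg` and `max_dist` parameters are hypothetical and chosen only for illustration.

```python
import math


def is_in_view(user_pos, view_dir, target_pos, fov_deg=90.0, max_dist=2000.0):
    """Return True if target_pos lies inside a cone around view_dir.

    All names and default values are illustrative assumptions,
    not part of any SDK.
    """
    # Vector from the user to the structure.
    offset = [t - u for t, u in zip(target_pos, user_pos)]
    dist = math.sqrt(sum(c * c for c in offset))
    if dist == 0.0:
        return True  # the user is standing on the target
    if dist > max_dist:
        return False  # too far away to be worth drawing

    norm = math.sqrt(sum(c * c for c in view_dir))
    if norm == 0.0:
        raise ValueError("view_dir must be non-zero")

    # Cosine of the angle between the view direction and the offset.
    cos_angle = sum(o * v for o, v in zip(offset, view_dir)) / (dist * norm)
    return cos_angle >= math.cos(math.radians(fov_deg / 2.0))
```

For example, with the user at the origin looking along the positive z axis, a structure at `(50, 0, 100)` passes the test, while one directly behind the user at `(0, 0, -100)` does not.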

In the virtual reality environment, the user can teleport to other locations. The user can also move forward, backward, left, and right with one controller’s touchpad, and change altitude with the other controller’s touchpad. The trigger button takes a screenshot, which is saved on the local system and then sent to the AI server for image recognition. Once the AI server has processed the image, the user can view the returned image by squeezing the controller’s side buttons. The image is displayed directly in front of the user regardless of their location in the environment. Squeezing the side buttons again hides the returned image so the user can continue assessing the environment. A WebRTC component was also planned. Its purpose was to give the user near real-time feedback from the AI server on image recognition in the environment.
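The side-button behavior described above can be sketched as a small toggle that places the returned image a fixed distance along the user's forward direction. This is a language-neutral illustration in Python rather than the project's Unity code. The `ResultPanel` class, its `toggle` method, and the 2-unit default distance are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class ResultPanel:
    """Hypothetical panel that shows the image returned by the AI server."""

    distance: float = 2.0  # assumed offset in front of the user
    visible: bool = False
    position: tuple = (0.0, 0.0, 0.0)

    def toggle(self, user_pos, forward):
        """Side-button press: show the panel in front of the user, or hide it."""
        if self.visible:
            # Second press hides the panel so assessment can continue.
            self.visible = False
            return

        length = sum(c * c for c in forward) ** 0.5
        if length == 0.0:
            raise ValueError("forward must be non-zero")

        # Normalize the forward vector, then step `distance` along it.
        unit = tuple(c / length for c in forward)
        self.position = tuple(
            p + self.distance * u for p, u in zip(user_pos, unit)
        )
        self.visible = True
```

Because the position is recomputed from the user's current pose on each press, the panel appears in front of the user wherever they are in the environment.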